Redshift is a supported source & target for SAP Data Services 4.2 SP8. However, there isn’t much information available about utilizing Redshift with the use of SAP Data Services. Therefore, I decided to summarize my recent observations related to this subject.

Supported Version

According to the SAP Data Services 4.2 Product Availability Matrix, SP8 supports Redshift tables as a source & target, and it also comes with the built-in function ‘load_from_s3_to_redshift’.

You can try and use an earlier version of SAP Data Services (by using a generic ODBC connection). However, in earlier versions, SAP Data Services has severe issues with getting the correct table structure – columns exceeding 255 characters are automatically detected as LONG, which causes transformation issues. In addition, insert statements are not pushed-down to the DB layer and performance is extremely slow. Therefore, I would suggest avoiding this unsupported setup.

Connectivity

SAP Data Services 4.2 SP8 and up, provides the option to create a Redshift Datastore. This Datastore utilizes the Redshift ODBC Driver. You can import table structures and use the regular features that are available in data stores.

‘load_from_s3_to_redshift’ Function

Redshift is optimized to load data from S3 buckets. Therefore, SAP Data Services provides the ability to utilize this option with a built-in function. Essentially, what the ‘load_from_s3_to_redshift’ function does, is to generate a COPY command which is executed on the Redshift cluster. Therefore, you can use this function to push-down loading of files directly to the Redshift cluster.

Below is an example of the ETL function used to load a CSV file, and beneath it, is the actual COPY command which is generated and executed on Redshift:

| load_from_s3_to_redshift(‘<Redshift Datastore>’, ‘<Redshift Datastore>.<schema>.<table>’, ‘<Bucket Definition>’, ‘<filename>.csv’, ‘MAXERROR AS 0 DATEFORMAT AS \’auto\’ TIMEFORMAT AS \’auto\’ ACCEPTANYDATE TRIMBLANKS EMPTYASNULL BLANKSASNULL COMPUPDATE ON STATUPDATE ON ROUNDEC CSV DELIMITER \’,\’ ENCODING AS UTF8 ACCEPTINVCHARS AS \’?\’ IGNOREHEADER AS 1 IGNOREBLANKLINES FILLRECORD NULL AS \’\\N\”);

copy <schema>.<table> from ‘s3://<bucket_name>/<folder>/<filename>.csv’ credentials ” MAXERROR AS 0 DATEFORMAT AS ‘auto’ TIMEFORMAT AS ‘auto’ ACCEPTANYDATE TRIMBLANKS EMPTYASNULL BLANKSASNULL COMPUPDATE ON STATUPDATE ON ROUNDEC CSV DELIMITER ‘,’ ENCODING AS UTF8 ACCEPTINVCHARS AS ‘?’ IGNOREHEADER AS 1 IGNOREBLANKLINES FILLRECORD NULL AS ‘\N’ region ‘eu-central-1’; |

Performance Issues

SAP Data Services has performance issues related to Redshift. The main performance hit is related to the fact, that most transform operations are not pushed down to Redshift.

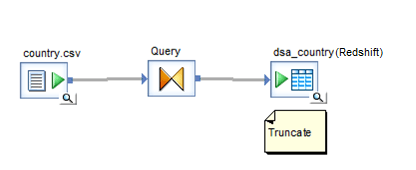

Loading files

Loading a CSV file without utilizing the function ‘load_from_s3_to_redshift’, resulted in extremely poor performance – It took 100 seconds to insert 1,000 records.

Loading the same file with ‘load_from_s3_to_redshift’, resulted in much faster performance – It took 10 seconds to insert 1,000 records.

| sql(‘Redshift’,’truncate table dsa.country’);

load_from_s3_to_redshift(‘Redshift’, ‘Redshift.dsa.country’, ‘S3_Bucket’, ‘country.csv’, ‘MAXERROR AS 0 DATEFORMAT AS \’DD-MON-YY\’ TIMEFORMAT AS \’auto\’ ACCEPTANYDATE TRIMBLANKS EMPTYASNULL BLANKSASNULL COMPUPDATE ON STATUPDATE ON ROUNDEC CSV DELIMITER \’,\’ ENCODING AS UTF8 ACCEPTINVCHARS AS \’?\’ IGNOREHEADER AS 1 IGNOREBLANKLINES FILLRECORD NULL AS \’\\N\”); |

The reason the CSV file load took much longer when not using the function, is because the CSV file must pass by the ETL server, and then the ETL server executes multiple insert statements to Redshift.

Please note that loading the same CSV file to other databases, usually performs much better, even though it too passes through the ETL server. For example, it took 2.6 seconds to load this file in-directly to an Oracle DB (with a similar setup).

Below is a comparison of the execution times:

| CSV Load Type | Execution time for 1,000 Rows |

| in-direct load to Redshift | 100 seconds |

| in-direct load to Oracle | 2.6 seconds |

| direct load from S3 to Redshift (‘load_from_s3_to_redshift’) | 10 seconds |

- In-direct load refers to a load process that passes by the ETL server.

Transforms

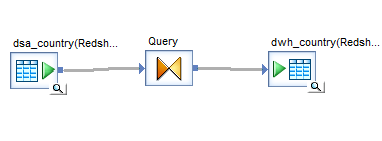

Executing a simple dataflow (retrieving data from a source Redshift table, to a target Redshift table), resulted in very good performance. This is because the whole operation was pushed down to the DB layer

Optimized SQL:

| INSERT INTO db.dwh_country (country_name , country_english_name , currency) SELECT substring( dsa_country.english_name , 1, 120) , substring( dsa_country.native_name , 1, 120) , ‘EUR’ FROM dsa.country dsa_country |

The problem of SAP Data Services with Redshift, is that the moment that you start to use functions in your transforms. The DML stops to be pushed-down to Redshift. This is true even with simple functions such as: to_char & to_decimal.

Instead it executes a SELECT statement, which retrieves the info, and the ETL server will then execute INSERT/UPDATE statements – all which seem to perform quite poorly.

Optimized SQL:

| SELECT substring( dsa_country.english_name , 1, 120) , substring( dsa_country.native_name , 1, 120) , ‘EUR’ FROM dsa.country dsa_country |

Below is a comparison of the execution time for these operations:

| SAP Data Services Operation | 1,000 Inserts | 1,000 Updates |

| Direct execution on Redshift | 1 second | 4 seconds |

| In-direct execution to Redshift | 64 seconds | 68 seconds |

| In-direct execution to Oracle | 1 second | 1 second |

- In-direct execution refers to a load process that passes by the ETL server.

The conclusion is that SAP Data Services does not push down most operations to Redshift, and as a result you will experience very bad performance in most of your ETL dataflows.

Workaround

The main issue is that most ETL operations from SAP Data Services, are not pushed-down to Redshift. Therefore, the workaround is to try and find a way, to push-down the DML operations to Redshift.

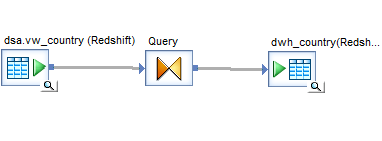

The easiest way to achieve this, is to create views which contain the required functions/transformations, and you import these views as tables into SAP Data Services.

Once these views are placed in a simple ETL dataflow, the whole DML operation will be pushed-down to Redshift and the performance will be good.

- Create view

create view dsa.vw_country

as

substring( dsa_country.english_name , 1, 120) as english_name, substring( dsa_country.native_name , 1, 120) as native_name, to_char(date,’YYYYMMDD’) as date_d, to_number(telephone,’999’) as prefix

from dsa.country dsa_country - Import view as a table into SAP Data Services and use it in a simple dataflow:

- The whole operation will be pushed-down to Redshift:

INSERT INTO db.dwh_country (country_name , country_english_name ,date_d, prefix)

SELECT english_name, native_name, date_d, prefix

FROM dsa.vw_country

This workaround, allows you to create SAP Data Services ETL, while still gaining the performance benefits of Redshift. However it does make the ETL process less intuitive and more cumbersome.

Conclusions & Suggestions

A more proper solution, would be to utilize an ETL tool which pushes most of the operations to Redshift. For this purpose, I recommend ‘Matillion ETL’, it is an ETL tool optimized for use with Redshift.

The reason it is optimized for use with Redshift, is that it allows you to create the same graphical ETL transformations, but it pushes most operations down to Redshift. Thus, it by-passes the ETL server and fully utilizes the performance benefits of Redshift. In addition, it provides ability to integrate to many databases and source systems.

Matillion can push-down most operations by creating many levels of nested sub-queries. This might not look pretty if you were to look at the DB execution. However, as the tool is rather graphical, you can choose to ignore these “ugly” queries and the result is a fast execution which meets your requirement.

Test Environment Details

Below are some technical details regarding the test environment used for this post:

- Redshift Cluster – Composed from two dc2.large nodes

- SAP Data Services 4.2 SP10 – Running on a t2.xlarge instance

- CSV file – A simple country definitions file which contains 1,000 rows with 7 columns